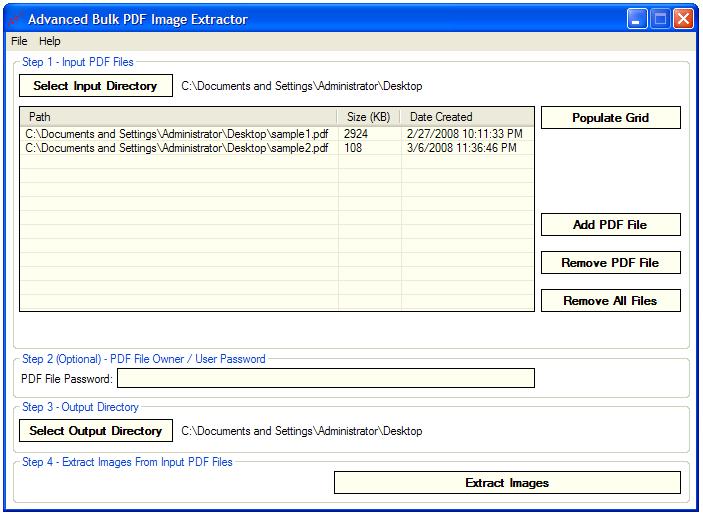

Updated on January 4th, 2023: removed references to SQS, which is not actually used in the proposed implementation.

In that case, we need to invoke some asynchronous APIs, poll an endpoint to check when it has finished working, and then read the result, which is paginated, so we need multiple API calls. Wouldn't it be super cool to just drop files in an S3 bucket and, after a few minutes, have their content in another S3 bucket? Let's see how to use AWS Lambda and SNS to automate the whole process!

Overview of the process

This is the process we are aiming to build:

1. A trigger will invoke an AWS Lambda function, which will inform AWS Textract of the presence of a new document to analyze.
2. AWS Textract will do its magic, and push the status of the job to an SNS topic.
3. The SNS topic will invoke another Lambda function, which will read the status of the job and, if the analysis was successful, download the extracted text and save it to another S3 bucket (but we could replace this with a write to DynamoDB or another database system).
4. The Lambda function will also publish the state to CloudWatch, so we can trigger alarms when a read was unsuccessful.

Since a picture is worth a thousand words, let me show a graph of this process.

While I am writing this, Textract is available only in 4 regions: US East (Northern Virginia), US East (Ohio), US West (Oregon), and EU (Ireland). I therefore strongly suggest creating all the resources in just one region, for the sake of simplicity.

S3 buckets

First of all, we need to create two buckets: one for our raw files, and one for the JSON files with the extracted text. We could also use the same bucket, theoretically, but with two buckets we can have better access control. Since I love boring solutions, for this tutorial I will call the two buckets textract_raw_files and textract_json_files. The official documentation explains how to create S3 buckets.

The first part of the architecture is informing Textract of every new file we upload to S3. We can leverage the S3 integration with Lambda: each time a new file is uploaded, our Lambda function is triggered, and it will invoke Textract. The body of the function is quite straightforward:

NotificationChannel=)

You can find a copy of this code hosted on GitLab.

As you can see, we receive a list of freshly uploaded files, and for each one of them, we ask Textract to do its magic. We also ask it to notify us when it has finished its work, by sending a message over SNS. We therefore need to create an SNS topic. How to do so is well explained in the official documentation. When we have finished, we should have something like this:

We copy the ARN of our freshly created topic and insert it in the script above in the variable SNS_TOPIC_ARN.

Now we need to actually create our Lambda function: once again, the official documentation is our friend if we have never worked with AWS Lambda before.
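To make the flow more concrete, here is a minimal sketch of the two Lambda functions in this pipeline. It is not the code hosted on GitLab, only an illustration under a few assumptions: that the jobs are started with Textract's `start_document_text_detection` call, that the notification channel needs an IAM role (the `ROLE_ARN` value is a placeholder, as is the value of `SNS_TOPIC_ARN`), and that the helper and handler names (`uploaded_objects`, `start_jobs`, `fetch_blocks`, `start_handler`, `result_handler`) are made up for this example. Publishing the job state to CloudWatch is left out.

```python
import json
from urllib.parse import unquote_plus

# Placeholders (assumptions): replace with your own ARNs and bucket name.
SNS_TOPIC_ARN = "arn:aws:sns:eu-west-1:123456789012:textract-jobs"
ROLE_ARN = "arn:aws:iam::123456789012:role/textract-sns-publisher"
OUTPUT_BUCKET = "textract_json_files"


def uploaded_objects(s3_event):
    """Yield (bucket, key) for every object listed in an S3 notification event."""
    for record in s3_event.get("Records", []):
        s3 = record["s3"]
        # Object keys arrive URL-encoded in S3 events (spaces become '+').
        yield s3["bucket"]["name"], unquote_plus(s3["object"]["key"])


def start_jobs(textract, s3_event):
    """Start one asynchronous text-detection job per uploaded file."""
    job_ids = []
    for bucket, key in uploaded_objects(s3_event):
        response = textract.start_document_text_detection(
            DocumentLocation={"S3Object": {"Bucket": bucket, "Name": key}},
            NotificationChannel={"SNSTopicArn": SNS_TOPIC_ARN, "RoleArn": ROLE_ARN},
        )
        job_ids.append(response["JobId"])
    return job_ids


def fetch_blocks(textract, job_id):
    """Collect the blocks of a finished job, following NextToken pagination."""
    blocks, token = [], None
    while True:
        kwargs = {"JobId": job_id}
        if token:
            kwargs["NextToken"] = token
        page = textract.get_document_text_detection(**kwargs)
        blocks.extend(page.get("Blocks", []))
        token = page.get("NextToken")
        if not token:
            return blocks


def start_handler(event, context):
    """Entry point for the Lambda triggered by S3 uploads."""
    import boto3  # imported lazily so the helpers can be tested without AWS
    return start_jobs(boto3.client("textract"), event)


def result_handler(event, context):
    """Entry point for the Lambda triggered by the SNS topic."""
    import boto3
    textract, s3 = boto3.client("textract"), boto3.client("s3")
    for record in event["Records"]:
        message = json.loads(record["Sns"]["Message"])
        if message["Status"] != "SUCCEEDED":
            continue  # a real function would report the failure here
        blocks = fetch_blocks(textract, message["JobId"])
        s3.put_object(
            Bucket=OUTPUT_BUCKET,
            Key=message["DocumentLocation"]["S3ObjectName"] + ".json",
            Body=json.dumps(blocks),
        )
```

Passing the Textract client in as an argument keeps the helpers independent of AWS credentials, so they can be exercised locally with a stub before the functions are deployed.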
